Why should students know about kappa value?

Posted on 13th May 2016 by Tran Quang Hung

“Looking at a mammogram is conceptually different from looking at images elsewhere in the body. Everything else has anatomy – anatomy that essentially looks the same from one person to the next. But we don’t have that kind of standardized information on the breast. The most difficult decision I think anybody needs to make when we’re confronted with a patient is: Is this person normal? And we have to decide that without a pattern that is reasonably stable from individual to individual, and sometimes even without a pattern that is the same from the left side to the right.”

(Malcolm Gladwell, What the Dog Saw)

You may have encountered this confusing situation before: clinicians disagree about the same chest X-ray. Some say they see infiltrates on the radiograph; others say they do not.

So puzzling, isn’t it?

You may not know which side to take, especially if you are a young medical student or a newly qualified physician. With little experience, you're left feeling stuck.

In statistics, we have a concept for such situations. It is called the kappa value.

Simply put, the kappa value measures how consistently multiple clinicians, examining the same patients (or the same imaging results), agree that a particular finding is present or absent.

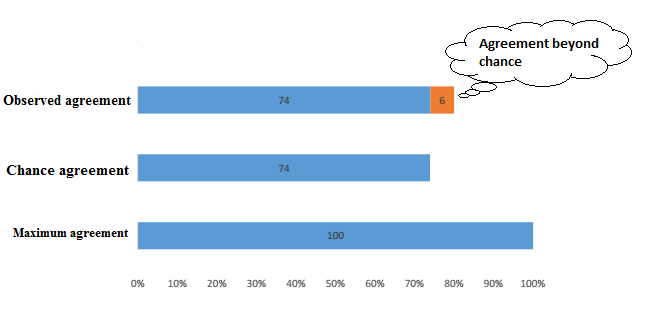

More technically, the role of the kappa value is to assess how much the observers agree beyond the agreement that is expected by chance.

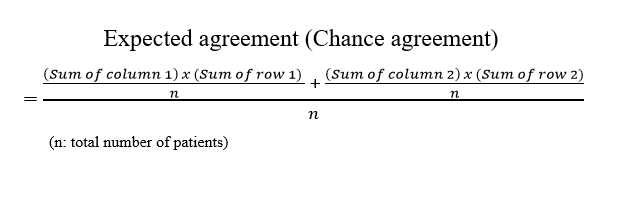
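In symbols, writing $p_o$ for the observed proportion of agreement and $p_e$ for the proportion of agreement expected by chance (labels chosen here for illustration), the kappa value is shown below. The numerator is the agreement the observers achieved beyond chance; the denominator is the largest possible agreement beyond chance.

```latex
\kappa = \frac{p_o - p_e}{1 - p_e}
```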

You may wonder what “beyond the agreement that is expected by chance” means. Let’s look at the example below to get a better sense of the definition.

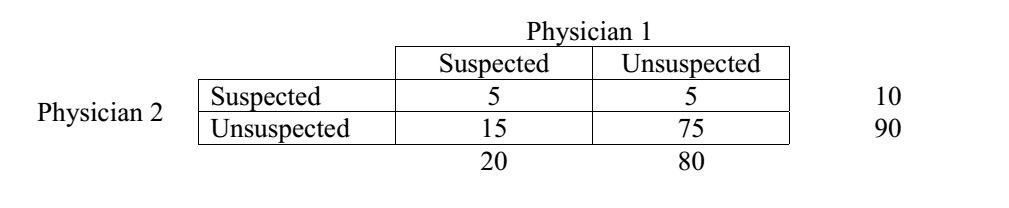

Two radiologists independently read the same 100 mammograms. Reader 1 suspects malignancy in 20 patients, and rules out malignancy in 80 patients. Reader 2 suspects malignancy in 10 patients, and rules out malignancy in 90 patients.

The table above shows the two readers’ observations.

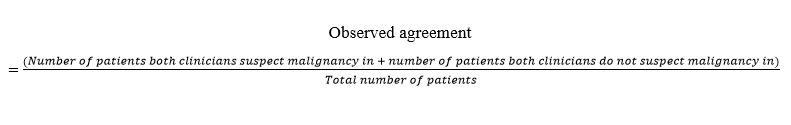

Both agree that malignancy is suspected in 5 patients and is ruled out in 75 patients. So, observed agreement is (5 + 75)/100 = 80%.

By chance alone, some agreement is expected. Reader 1 suspects malignancy in 20% of patients and Reader 2 in 10%, so two readers judging independently would both suspect malignancy in (10 x 20)/100 = 2 patients, and both find nothing abnormal in (90 x 80)/100 = 72 patients. So chance agreement (or expected agreement) = (2 + 72)/100 = 74%. The kappa value in this case = (80% – 74%)/(100% – 74%) = 0.23. (You can find the formula for working this out yourself a bit further down this page.)
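If you want to check this arithmetic yourself, here is a minimal Python sketch. The function name `cohens_kappa` and the table layout (rows for Reader 1, columns for Reader 2) are our own assumptions for illustration. The two disagreement cells (15 and 5) are not stated in the text; they follow from the totals: 20 − 5 = 15 mammograms flagged only by Reader 1, and 10 − 5 = 5 flagged only by Reader 2.

```python
def cohens_kappa(table):
    """Cohen's kappa for two readers, from a square table of counts.

    table[i][j] = number of patients that Reader 1 placed in category i
    and Reader 2 placed in category j (hypothetical layout for this sketch).
    """
    k = len(table)
    n = sum(sum(row) for row in table)

    # Observed agreement: share of patients on the diagonal (both readers agree)
    p_o = sum(table[i][i] for i in range(k)) / n

    # Each reader's total per category (the table's margins)
    reader1_totals = [sum(row) for row in table]
    reader2_totals = [sum(table[i][j] for i in range(k)) for j in range(k)]

    # Chance agreement: for each category, (Reader 1 total x Reader 2 total) / n
    # patients, summed and divided by n again to give a proportion
    p_e = sum(r1 * r2 for r1, r2 in zip(reader1_totals, reader2_totals)) / n**2

    if p_e == 1:
        raise ValueError("kappa is undefined when chance agreement is 100%")
    return (p_o - p_e) / (1 - p_e)


# Mammogram example: rows = Reader 1, columns = Reader 2,
# category order = (suspects malignancy, rules out malignancy)
mammograms = [
    [5, 15],
    [5, 75],
]
print(round(cohens_kappa(mammograms), 2))  # 0.23
```

The same function works unchanged for findings with more than two categories, as long as both readers use the same category list. If you have raw per-patient ratings rather than a summary table, scikit-learn’s `cohen_kappa_score` computes the same statistic.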

By convention, a kappa value of 0.23 indicates fair agreement between the physicians:

- Kappa value 0.00-0.20 => slight agreement

- Kappa value 0.21-0.40 => fair agreement

- Kappa value 0.41-0.60 => moderate agreement

- Kappa value 0.61-0.80 => substantial agreement

- Kappa value 0.81-1.00 => almost perfect agreement
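The conventional scale above is simply a lookup, which the small Python helper below makes explicit. The name `agreement_label` is ours, for illustration only. The sketch assigns a value that falls exactly on a band edge (such as 0.40) to the lower band, and because the list does not cover values below 0, it raises an error for them.

```python
# Upper edge of each band and its conventional label (from the list above)
BANDS = [
    (0.2, "slight"),
    (0.4, "fair"),
    (0.6, "moderate"),
    (0.8, "substantial"),
    (1.0, "almost perfect"),
]


def agreement_label(kappa):
    """Return the conventional agreement label for a kappa value in [0, 1]."""
    if not 0 <= kappa <= 1:
        raise ValueError("the scale above only covers kappa values from 0 to 1")
    # Bands are checked from lowest to highest; an edge value goes to the lower band
    for upper, label in BANDS:
        if kappa <= upper:
            return label + " agreement"


print(agreement_label(0.23))  # fair agreement
print(agreement_label(0.38))  # fair agreement
```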

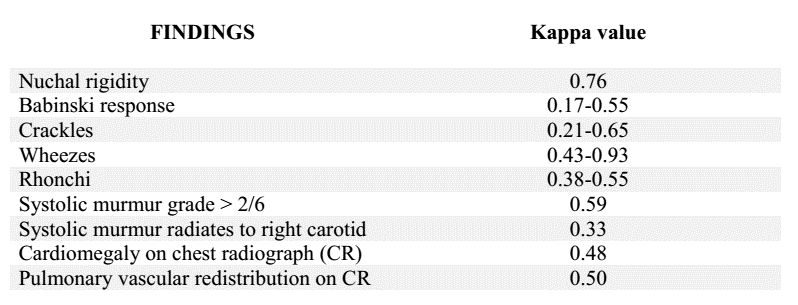

The kappa value for pulmonary infiltrates on chest X-ray is 0.38 [2], which also indicates only fair agreement between clinicians.

Kappa values of some familiar diagnostic findings [2]

What does the kappa value tell us about medicine?

1/ Many physical examination and imaging findings have less-than-optimal kappa values.

There are several possible reasons. The definition of the finding may be vague or ambiguous. The clinicians’ technique may be flawed. They may be inattentive while performing the examination. Or their conclusions may be biased by what they expect to find.

Keep in mind that many factors can distort clinical judgement, and try to guard against them.

2/ Some physical examination techniques are associated with subjectivity.

Take jaundice detection as an example. To the naked eye, how “yellow” is yellow? There is no exact criterion. Clinical studies show that only 70-80% of observers detect jaundice when the serum bilirubin (a yellow pigment in the blood) exceeds 2.5-3 mg/dL. Even when bilirubin exceeds 10 mg/dL, the sensitivity of the examination is only about 83% [2]. So whenever you suspect raised bilirubin, order a bilirubin measurement instead of relying on a subjective impression.

Remember to use the tools at hand wisely.

3/ Practicing medicine is like photography: every physician comes at things from his or her own angle.

Even with laboratory tests, which present clinicians with a single, indisputable number, disagreement between clinicians is still possible, and even common [2].

In one study, three endocrinologists reviewed the same thyroid function tests and other clinical data of 55 outpatients with suspected thyroid disease. The endocrinologists disagreed about the final diagnosis 40% of the time [3].

When you build your own way of interpreting medical data, make sure it has an evidence-based foundation. Intuition sometimes does a great job, but no one can guarantee that it will do no harm.

REFERENCES

1. McGinn T, et al. Tips for teachers of evidence-based medicine: 3. Understanding and calculating kappa. CMAJ. 2004;171(11):1369–1373.

2. McGee S. Evidence-based physical diagnosis. 3rd ed. Philadelphia: Saunders Elsevier; 2012.

3. Jarlov AE, Nygaard B, Hegedus L, et al. Observer variation in the clinical and laboratory evaluation of patients with thyroid dysfunction and goiter. Thyroid. 1998;8(5):393-398.

All of the images in this blog have been created by the blog author.


![Tran - Kappa formula[1]](https://static.s4be.cochrane.org/wp-content/uploads/2016/05/Tran-Kappa-formula1.png)

Comments on Why should students know about kappa value?

Perfect, as always

11th March 2021 at 7:10 pm

Hi,

29th November 2019 at 6:46 am

your all steps to solve kappa value is very helpful.

Why not showing the CI, it seems a very important

11th June 2016 at 7:36 pm

Yup, you are right, Doc toc. The 95% CI should be alongside the calculated results. But in this post I focus on the meaning of kappa value, rather than any specific value, so I just leave the CIs out. Too many details can mess things up.

Thanks for reading and commenting :)

17th June 2016 at 9:23 am